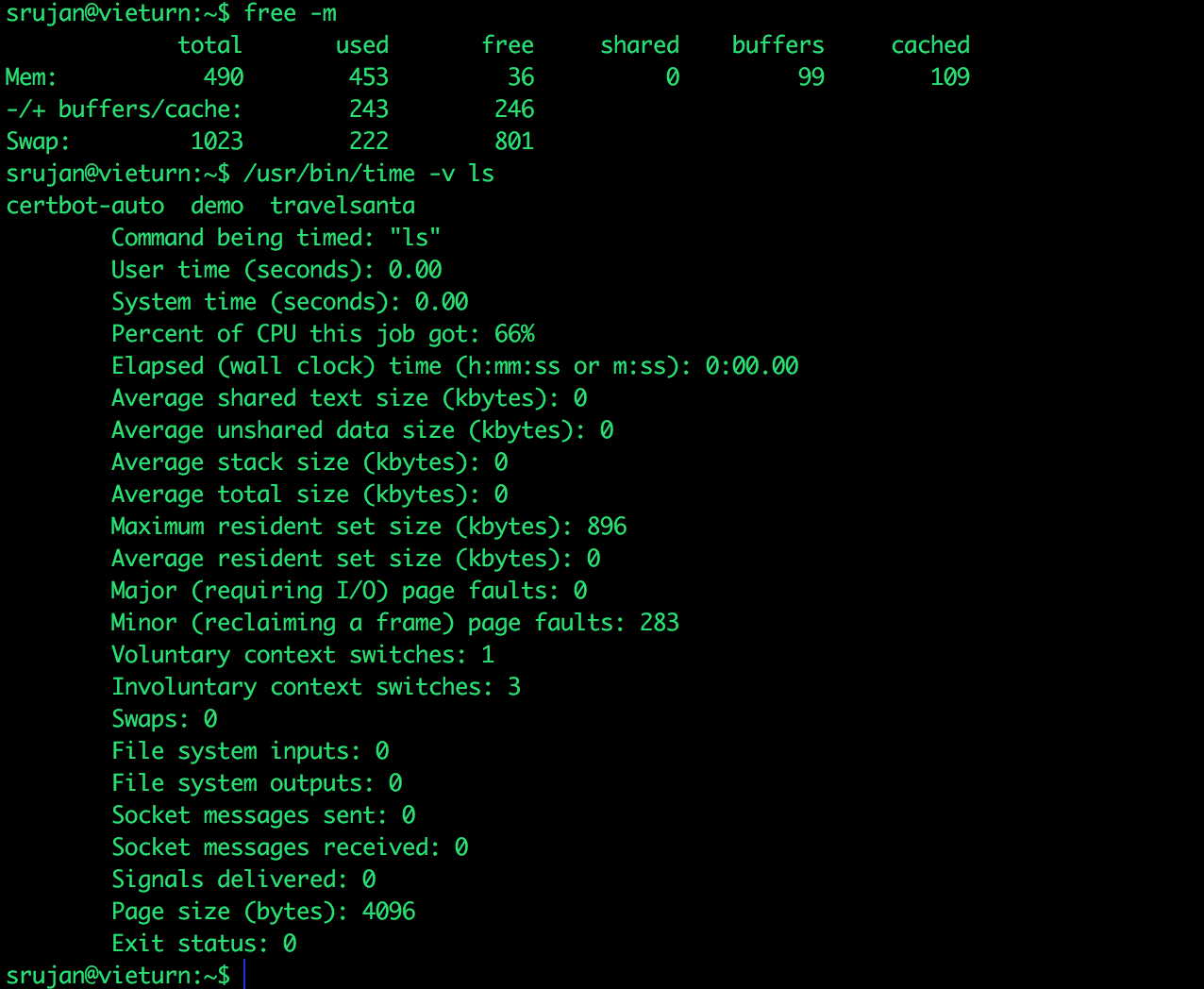

My RAM :O :(

At its core, Linux has a simple philosophy: "Everything is a file".

Linux communicates with everything, including block devices, serial (character) devices, and network devices, as if they were files (the same way one would open and close a regular file).

- Block devices (e.g. hard disks) cater to use cases where data can be read in any order, in fixed-size blocks. This is largely the use case of the operating system itself, which asks for files in no particular order.

- Serial devices (e.g. keyboards), on the other hand, only allow sequential access. The input order is preserved when the data is read.

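Input/output operations on block devices are scheduled with an "elevator algorithm". If the elevator is going up, it will not accept any downward requests until it reaches the top floor. In the same way, the disk services pending requests in one direction before turning around.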
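The elevator idea can be sketched in a few lines of Python. This is only an illustration of the classic one-direction sweep, not the actual kernel I/O scheduler; the function name `elevator_order` and the sample block numbers are made up for the example.

```python
def elevator_order(requests, head, direction="up"):
    """Return the order in which pending block requests get serviced.

    requests:  block numbers waiting in the queue (hypothetical values)
    head:      current position of the disk head
    direction: "up" (toward higher block numbers) or "down"
    """
    if direction == "up":
        # Sweep upward first, then turn around for the blocks left behind.
        ahead = sorted(r for r in requests if r >= head)
        behind = sorted((r for r in requests if r < head), reverse=True)
        return ahead + behind
    # Sweep downward first, then turn around for the higher blocks.
    behind = sorted((r for r in requests if r <= head), reverse=True)
    ahead = sorted(r for r in requests if r > head)
    return behind + ahead


if __name__ == "__main__":
    pending = [98, 183, 37, 122, 14, 124, 65, 67]
    print(elevator_order(pending, head=53))
    # -> [65, 67, 98, 122, 124, 183, 37, 14]
```

Requests below the head (37 and 14) wait until the upward sweep is finished, just like the downward passengers in the elevator.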

Hard disks are generally slow compared to RAM. Applications have to wait for their I/O requests to complete, because requests from different applications are queued up.

Disk I/O is typically the slowest part of a Linux system. By nature, an I/O operation on a hard disk takes far longer to complete than work done on the CPU or in RAM.

If a process does a lot of read/write operations on the disk, other processes may lag or respond slowly, because they are all waiting for their own I/O operations to complete.

How does Linux handle this?

free -m and /usr/bin/time -v ls

How much RAM is free?

There are three rows in the output: "Mem:", "-/+ buffers/cache", and "Swap:".

According to the first row, we have 36 MB of memory free.

But in reality I have 246 MB of memory free out of a total of 490 MB (from the second row of the output, "-/+ buffers/cache").
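To see where the second number comes from, here is a rough Python sketch that adds buffers and cache back onto the free figure, using the fields of /proc/meminfo. The helper names and the `SAMPLE` text are my own; the sample values are made up to match the rounded numbers above (the split between "Buffers" and "Cached" is an assumption, only their sum of 210 MB follows from the output).

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of kB values."""
    values = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if not sep or not fields:
            continue
        values[key.strip()] = int(fields[0])
    return values


def free_including_cache_mb(info):
    """Free memory plus buffers and page cache, in whole MB."""
    kb = info["MemFree"] + info["Buffers"] + info["Cached"]
    return kb // 1024


# Illustrative values only, chosen to match the numbers in this post.
SAMPLE = """\
MemTotal:         501760 kB
MemFree:           36864 kB
Buffers:           20480 kB
Cached:           194560 kB
"""

if __name__ == "__main__":
    try:
        with open("/proc/meminfo") as f:
            text = f.read()
    except OSError:
        text = SAMPLE  # not on Linux: fall back to the sample
    info = parse_meminfo(text)
    print("free (first row):    ", info["MemFree"] // 1024, "MB")
    print("free + buffers/cache:", free_including_cache_mb(info), "MB")
```

With the sample values this gives 36 MB for the first row and 246 MB once buffers and cache are counted as available.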

Why does the first row look incorrect?

The Linux kernel breaks I/O into pages, and the default page size is 4096 bytes (it can vary with the architecture and kernel configuration). It reads and writes blocks into and out of memory (RAM) in 4096-byte pages.

The second-to-last item in the /usr/bin/time -v output says our page size is 4096 bytes.
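The page size can also be checked from Python. A small sketch, assuming a Unix-like system where `os.sysconf` is available; `pages_needed` is a hypothetical helper added only to show the rounding.

```python
import os

# Ask the OS for the page size of this machine (commonly 4096 on x86).
PAGE_SIZE = os.sysconf("SC_PAGE_SIZE")


def pages_needed(nbytes, page_size=PAGE_SIZE):
    """Number of whole pages required to hold nbytes (rounded up)."""
    return (nbytes + page_size - 1) // page_size


if __name__ == "__main__":
    print("page size:", PAGE_SIZE, "bytes")
    # Even a single byte past a page boundary costs a whole extra page.
    print("pages for 4097 bytes at 4096 bytes/page:", pages_needed(4097, 4096))
```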

When a process starts, the processor looks for the data in memory pages: first in the CPU cache, and then in RAM.

If the data is not present there, it has to be requested from the hard disk. This is called a major page fault: the pages are fetched from the hard disk and stored in RAM.

If the data is already present in the buffer cache in RAM, the access causes a minor page fault. Fetching pages from the buffer cache is much faster because no disk I/O is needed.

A major page fault takes time because it is an I/O request to the disk drive. The minor page fault count is high on every run, because almost all data is accessed only after it is already in the buffer cache in RAM.

So an application started for the first time takes a little longer to run, because of major page faults.
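The same two counters that /usr/bin/time -v reports as "Major (requiring I/O) page faults" and "Minor (reclaiming a frame) page faults" can be read from inside a process. A minimal sketch using Python's standard `resource` module (Unix only); the helper names are mine, and the actual counts depend on the machine and on what is already cached, so no output is shown.

```python
import resource


def fault_counts():
    """Return (minor, major) page fault counts for the current process."""
    usage = resource.getrusage(resource.RUSAGE_SELF)
    return usage.ru_minflt, usage.ru_majflt


def faults_during(func):
    """Run func() and return how many (minor, major) faults it caused."""
    minor_before, major_before = fault_counts()
    func()
    minor_after, major_after = fault_counts()
    return minor_after - minor_before, major_after - major_before


if __name__ == "__main__":
    # Allocating and zero-filling 32 MB touches many fresh pages, which
    # usually shows up as minor faults (no disk access required).
    minor, major = faults_during(lambda: bytearray(32 * 1024 * 1024))
    print("minor faults:", minor)
    print("major faults:", major)
```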

To reduce major page faults, the kernel tries to keep as many blocks as possible in the buffer cache, so that they can be retrieved very quickly the next time.

So the first row of the "free" output is correct as well: a large amount of RAM really is in use, for the buffer caching done by the kernel.

It's not incorrect.

Alas! Kernel is stealing my memory!!

No, Linux gives priority to user processes. The kernel will drop its cached pages when your process needs more memory.